Organisations are accumulating AI risk in rush to adoption

Key points

Commercial, investor, and psychological pressure to use AI is driving organisations into rapid, unplanned adoption.

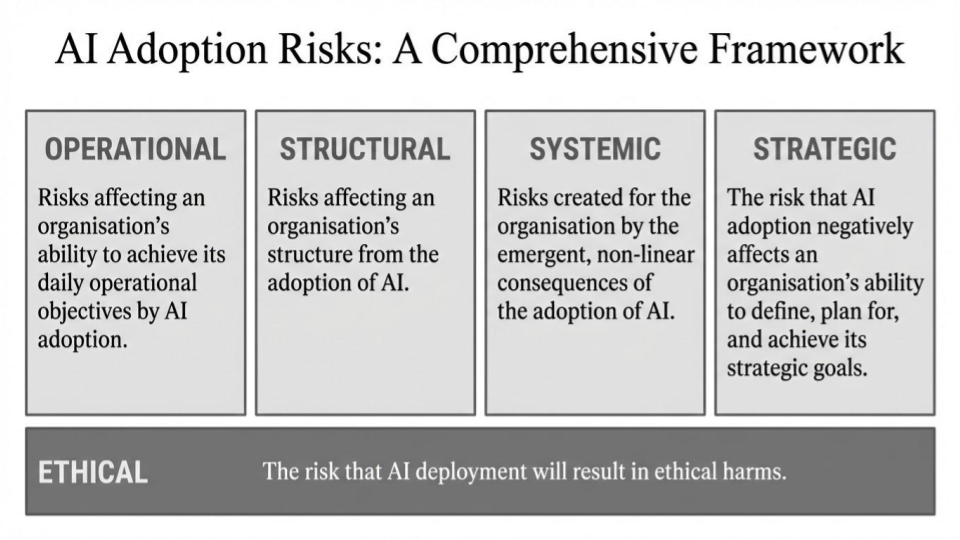

Organisations adopting AI are accumulating operational, structural, systemic, and strategic risk.

‘Unknown unknowns’ raise the likelihood that bow waves of silent risk, which will only become apparent over time, are building across multiple sectors.

Concerned about AI risk?

Our Sovereignty and Security Due Diligence service provides independent assurance of AI adoption risk in transactional contexts.

Contact us for a consultation.

The rush to AI adoption

2026 will likely be remembered as the year when the adoption of AI tools and services went mainstream. Despite sustained high public interest in AI since the launch of ChatGPT in November 2022, widespread adoption by organisations has been slow; a UK government study found that in 2025 only 16% of UK businesses were using AI [LINK].

The release of the open-source agentic AI platform OpenClaw in late 2025 sparked a surge of interest within the AI community that, by 2026, was cutting through to wider audiences. Combined with the aggressive bundling of AI systems by major vendors, the rise of readily available agentic AI points to the potential for substantial growth in adoption in 2026.

In this environment, individuals, organisations, and investors are under growing pressure to adopt AI tools and services. This pressure can be self-inflicted – reflecting a fear of being left behind – or exogenous, driven by external investors, commercial partners, or the markets.

The pressure on organisations comes from multiple sources:

AI usage is being adopted as a metric in employee performance reviews in an increasing number of organisations [LINK].

The public sector in the UK is under pressure to deliver improved and more efficient services through the adoption of AI.

Internationally, AI leaders such as the US and China are strongly advocating for widespread adoption, including through trade deals.

AI adoption has emerged as a tacit prerequisite for many investors and in M&A deals, often without any requirements around how the technology will be integrated, used, and developed.

Companies have started demanding cost reductions from service providers, on the assumption that AI will reduce the providers’ costs.

This pressure creates a situation where individuals, organisations, and investors are prioritising adoption despite being uncertain about how to integrate AI into their operations. The same UK government study found that, of the 5% of businesses planning to adopt AI, only a third “feel ready to implement it”, citing a lack of skills and expertise [LINK].

Focus on AI opportunities is downplaying long-term risk

The pressure to adopt AI is more than a rational response to commercial or technological considerations; it also reflects a psychological desire to avoid missing out on a rapidly moving trend. For this reason, analysts have adopted the term ‘AI FOMO’ (fear of missing out) [LINK, LINK, or LINK].

This AI FOMO is pushing organisations into rapid adoption. In the next article in this series we will examine how AI adoption goes wrong and what this means for value preservation and the wider market.

There are many parallels between AI adoption and earlier periods when innovative technologies were rapidly operationalised by large numbers of non-specialist organisations. The early days of web applications, online commerce, and cloud storage were also characterised by system-wide accumulation of risk emerging from naive or poorly managed adoption. This created a ‘bow wave’ of vulnerability which, once identified, was exploited at scale before being gradually and painstakingly addressed through improved implementation processes and security standards.

With AI adoption, individuals, organisations, and highly integrated global economies are again working through this process, but this time at a dramatically accelerated rate.

AI risk breakdown

The promised benefits of AI are materialising slowly, and for some organisations not at all. The UK government report notes that while organisations adopting AI are reporting an increase in productivity, this has not been accompanied by a change in revenue [LINK]. Gartner similarly reports that 74% of AI leaders claim to have observed increased productivity, but only 11% have seen this matched by measurable financial value [LINK].

Amid uncertainty over the benefits of AI, one thing is clear: rushed or poorly implemented adoption can create significant risk.

Two common threads run through these risks: they are characterised by ‘unknown unknowns’, and they accumulate silently:

The widespread adoption of novel technology across a wide range of organisations is almost certain to produce unexpected outcomes. Historically, some of the most consequential and disruptive applications of technology have been unanticipated; the corollary is that some negative consequences are likely to be ‘unknown unknowns’, whose nature is discovered only over time.

Some AI risk is also likely to be ‘silent’, in the sense that it will accumulate without causing immediate loss or disruption to the organisation. This may be because the organisation is unaware of the risk-creating behaviour. Alternatively, the organisation may be aware of the behaviour but not recognise that it is creating risk.

Taken together, these factors suggest that organisations are highly likely to be underestimating their level of AI risk. This section breaks down the different types of risk that AI adoption poses for organisations.

Operational risk

Risks created by AI adoption that affect an organisation’s ability to achieve its day-to-day operational objectives.

AI adoption creates data protection and security risks. Rapidly integrating multi-user systems that exhibit emergent behaviour and depend on wide-ranging access to organisational data expands the organisation’s exposure to security and data protection failures. We anticipate that AI systems will quickly become a key driver of data breaches.

AI adoption will exacerbate existing operational debt. Organisations that already have under-developed or deficient processes around information management, cyber security, or data protection risk making these problems worse by adopting AI systems. Processes may appear to become more effective through the adoption of AI while remaining unfit for purpose; a poorly designed compliance process may be accelerated by AI, but it will not become a more accurate reflection of reality.

Changes to, or withdrawal of, services will pose operational risks for adopters. AI models and services change rapidly. Older models will be deprecated, providers of AI-based services will enter and leave the market, and geopolitical and reputational factors can change the acceptability of using a given model almost overnight. For organisations that have integrated these services into their workflows, this poses the risk of sudden operational disruption.

Structural risk

Risks to an organisation’s structure arising from the adoption of AI.

AI adoption is highly likely to lead to changes in organisational shape and size. The shape and character of organisations will shift as AI alters required skillsets, transforms career paths and talent pipelines, increases the importance of some teams, and renders others less important or redundant. It will also move money between budget lines.

AI adoption will shape organisational information flows. Organisations will have to change or create data collection and standardisation processes to enable effective AI adoption; this will create new flows of data and new forms of visibility within the organisation. AI adoption also risks exacerbating some information silos or bottlenecks, even as it reduces others.

AI adoption is likely to become a major source of bureaucratic friction and competition within organisations. Given the changes described above, AI adoption is highly likely to be contested, both as a tool in internal competition and as the prize over which that competition is fought. This risks encouraging wasteful, rent-seeking, or counter-productive allocation of resources. AI adoption will tear some organisations apart.

Systemic risk

Risks created for the organisation by the emergent, non-linear consequences of the adoption of AI.

AI adoption blurs the boundary between the organisation and the wider world. Businesses that integrate AI into their operations will become increasingly dependent on, and exposed to, wider shifts in a complex ecosystem of data, compute, and fellow adopters, creating novel risks and exacerbating existing ones.

Organisations will increasingly be exposed to AI risk from external entities. Even organisations that do not adopt AI systems will be exposed to risk created by organisations that do, whether these are suppliers, customers, or simply fellow users of shared infrastructure.

AI systems will grow in complexity over time, even within single entities. Multiple phases of AI adoption and the use of personal and shadow AI will create complex digital ecologies even in small organisations. Managing this accumulation of AI will be challenging, and organisations will have to devote time and resources to stripping out older or poorly implemented AI systems. AI ‘archaeology’, the work of uncovering what legacy AI systems do and how they are embedded in the organisation, will rapidly become a highly desirable skillset.

Strategic risk

The risk that AI adoption negatively affects an organisation’s ability to define, plan for, and achieve its strategic goals.

AI adoption presents risks to an organisation’s ability to formulate and implement strategy autonomously. The perception that AI offers a means of drastically reducing costs and increasing productivity is likely to distort organisational strategy. Even organisations that do not adopt AI will likely be forced to devote considerable time and energy to justifying this decision.

Organisations that involve AI in strategy development face novel risks. Common AI tendencies such as hallucination, flip-flopping, and defaulting to moderate positions pose risks to organisations that introduce these tools into strategy development. The possibility of AI systems engaging in self-protective or other deceptive behaviours also introduces an active risk that human decision-makers will be manipulated.

Changing market conditions will create risk for companies that have adopted AI. Virtually all businesses will rely on external service providers for some aspect of their AI, making them dependent on those commercial partners. More broadly, some form of correction in the AI market is almost certain. Whether this takes the form of regulatory change, market adjustment, or the catastrophic bursting of a bubble is outside the scope of this analysis. However, the high likelihood of some level of market correction creates financial risk for companies that are rapidly adopting AI.

A reassessment of the value of AI would create risks for adopters. The rush to AI adoption may prove to be an instance of tulip mania [LINK], in which market value deviates substantially from the intrinsic value of the thing being traded. A broad loss of confidence in the transformative benefits of AI would leave companies struggling to justify their own investment in AI capabilities, both internally and to the markets.

Transversal: ethical risk

The risk that AI deployment will result in ethical harms.

Running across these risk categories is a transversal category of ethical risk. This has wider implications for reputational and legal risk, but here we focus on the ethical risks themselves. Below, we highlight examples of ethical risk arising alongside each of the four risk categories identified above.

Ethical risk and operational risk. For organisations providing sensitive or safety-critical services, interruption or distortion of operational processes may carry substantial ethical implications.

Ethical risk and structural risk. As one example, the structural risk of AI adoption leading to significant redundancies within parts of a company’s workforce creates concomitant ethical risk for the company.

Ethical risk and systemic risk. In a deeply interconnected digital ecosystem, the systemic implications of organisations’ adoption of AI pose serious ethical questions. Organisations will introduce risk into processes and services used by other parts of the system; their actions may also conceivably affect the stability of the system as a whole.

Ethical risk and strategic risk. Some organisations are likely to face the question of whether the inability to align AI adoption with their values means that the ethical choice is to cease operations.

Contact us

Secured is a UK-based organisation that provides strategic advisory services to organisations concerned about threats to the security of research, innovation, and investment.

Our security practitioners help entities secure their intellectual property, build operational and financial resilience, and cultivate a positive organisational security culture.

We provide research on the national security implications of emerging technologies as part of our scientific and technical intelligence assessment capability.

Secured is part of Tyburn St Raphael Ltd, a boutique security consultancy.